Law Sites

Last week, I reported here that researchers at Stanford University planned to augment a study they had released of generative AI legal research tools from LexisNexis and Thomson Reuters, which found that the tools deliver hallucinated results more often than the companies’ marketing of the products suggests.

The study came under criticism for its omission of Thomson Reuters’ generative AI legal research product, AI-Assisted Research in Westlaw Precision. Rather, it reviewed Ask Practical Law AI, a research tool that is limited to content from Practical Law, a collection of how-to guides, templates, checklists, and practical articles.

The authors explained that Thomson Reuters had denied their multiple requests for access to the AI-Assisted Research product.

Shortly after the report came out, Thomson Reuters agreed to give the authors access to the product, and the authors said they would augment their results.

That augmented version of the study has now been released, and the Westlaw product did not fare well.

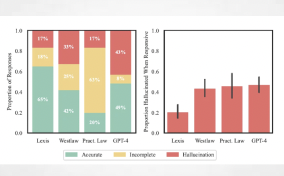

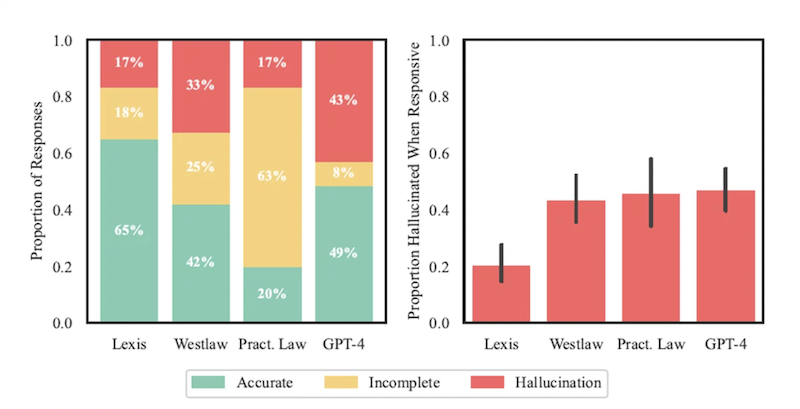

While the study found that the LexisNexis generative AI product, Lexis+ AI, correctly answered 65% of the researchers’ queries, Westlaw’s AI-Assisted Research was accurate only 42% of the time.

Worse, Westlaw was found to hallucinate at nearly twice the rate of the LexisNexis product — 33% for Westlaw compared to 17% for Lexis+ AI.

“On the positive side, these systems are less prone to hallucination than GPT-4, but users of these products must remain cautious about relying on their outputs,” the study says.

One Reason: Longer Answers

The authors speculate that one reason Westlaw hallucinates at a higher rate than Lexis is that it generates the longest answers of any product they tested. Its answers average 350 words, compared to 219 for Lexis and 175 for Practical Law.
